Making self-driving cars safer through keener robot perception

PhD student Heng Yang is developing algorithms to help driverless vehicles quickly and accurately assess their surroundings.

Aviation became a reality in the early 20th century, but it took 20 years before the proper safety precautions enabled widespread adoption of air travel. Today, the future of fully autonomous vehicles is similarly cloudy, due in large part to safety concerns.

To accelerate that timeline, graduate student Heng “Hank” Yang and his collaborators have developed the first set of “certifiable perception” algorithms, which could help protect the next generation of self-driving vehicles — and the vehicles they share the road with.

Though Yang is now a rising star in his field, it took many years before he decided to research robotics and autonomous systems. Raised in China’s Jiangsu province, he graduated with top honors from Tsinghua University. He spent his time in college studying everything from honeybees to cell mechanics. “My curiosity drove me to study a lot of things. Over time, I started to drift more toward mechanical engineering, as it intersects with so many other fields,” says Yang.

Yang went on to pursue a master’s in mechanical engineering at MIT, where he worked on improving an ultrasound imaging system to track liver fibrosis. To reach his engineering goal, Yang decided to take a class about designing algorithms to control robots.

“The class also covered mathematical optimization, which involves adapting abstract formulas to model almost everything in the world,” says Yang. “I learned a neat solution to tie up the loose ends of my thesis. It amazed me how powerful computation can be toward optimizing design. From there, I knew it was the right field for me to explore next.”

Algorithms for certified accuracy

Yang is now a graduate student in the Laboratory for Information and Decision Systems (LIDS), where he works with Luca Carlone, the Leonardo Career Development Associate Professor in Engineering, on the challenge of certifiable perception. When robots sense their surroundings, they must use algorithms to make estimations about the environment and their location. “But these perception algorithms are designed to be fast, with little guarantee of whether the robot has succeeded in gaining a correct understanding of its surroundings,” says Yang. “That’s one of the biggest existing problems. Our lab is working to design ‘certified’ algorithms that can tell you if these estimations are correct.”

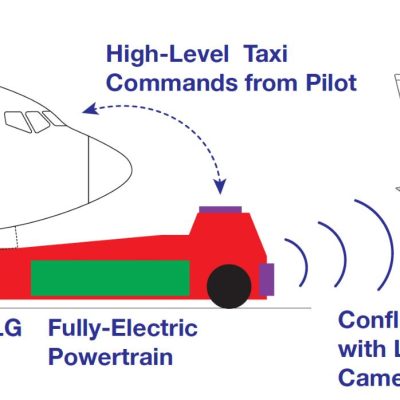

For example, robot perception begins with the robot capturing an image, such as a self-driving car taking a snapshot of an approaching car. The image goes through a machine-learning system called a neural network, which detects keypoints in the image that mark the approaching car’s mirrors, wheels, doors, and other parts. From there, lines are drawn linking each detected keypoint on the 2D car image to its labeled counterpart on a 3D car model. “We must then solve an optimization problem to rotate and translate the 3D model to align with the keypoints on the image,” Yang says. “This 3D model will help the robot understand the real-world environment.”
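To make the "rotate and translate" step concrete, here is a minimal sketch of the simplest version of that alignment problem. It is a flat, 2D simplification that assumes every keypoint match is already correct, whereas the system described above must align a 3D model to a camera image. The function names and keypoint coordinates are hypothetical, chosen only for illustration.

```python
import math

def align_2d(model, observed):
    """Least-squares rotation angle and translation mapping model onto observed.

    Assumes model[i] and observed[i] are correctly matched 2D keypoints.
    """
    n = len(model)
    mx = sum(p[0] for p in model) / n
    my = sum(p[1] for p in model) / n
    ox = sum(q[0] for q in observed) / n
    oy = sum(q[1] for q in observed) / n

    # Accumulate dot and cross products of the centered point pairs.
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(model, observed):
        ax, ay = px - mx, py - my
        bx, by = qx - ox, qy - oy
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx

    # The best rotation angle has a closed form in 2D.
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # The translation moves the rotated model centroid onto the observed centroid.
    tx = ox - (c * mx - s * my)
    ty = oy - (s * mx + c * my)
    return theta, (tx, ty)

def transform(points, theta, t):
    """Rotate points by theta (radians), then translate by t."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + t[0], s * x + c * y + t[1]) for x, y in points]

# Hypothetical model keypoints (a 4 x 2 rectangle) and a synthetic observation.
model = [(0.0, 0.0), (4.0, 0.0), (4.0, 2.0), (0.0, 2.0)]
observed = transform(model, math.radians(30), (5.0, -1.0))

theta, t = align_2d(model, observed)
print(math.degrees(theta), t)
```

With perfect matches this problem is easy and has a closed-form answer. The difficulty comes when some of the matches are wrong.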

Each traced line must be analyzed to see if it has created a correct match. Since many keypoints could be matched incorrectly (for example, the neural network could mistake a mirror for a door handle), this problem is “non-convex” and hard to solve. Yang says that his team’s algorithm, which won the Best Paper Award in Robot Vision at the International Conference on Robotics and Automation (ICRA), smooths the non-convex problem into a convex one and finds successful matches. “If the match isn’t correct, our algorithm will know how to continue trying until it finds the best solution, known as the global minimum. A certificate is given when there are no better solutions,” he explains.

“These certifiable algorithms have a huge potential impact, because tools like self-driving cars must be robust and trustworthy. Our goal is to make it so a driver will receive an alert to take over the steering wheel if the perception system has failed.”
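The idea of smoothing a non-convex problem into a convex one can be illustrated with a toy example. The sketch below follows the general style of graduated non-convexity: it starts from an ordinary least-squares problem, then gradually sharpens a robust cost so that bad measurements lose their influence. The Geman-McClure cost, the scalar unknown, the parameter values, and the sample numbers are all assumptions made for illustration. This is not the team's implementation, and it does not produce a certificate.

```python
def graduated_robust_mean(measurements, c=1.0, shrink=1.4):
    """Estimate a scalar from measurements that may contain outliers.

    c is the assumed inlier noise bound; shrink controls how quickly the
    smoothed cost is sharpened toward the true (non-convex) robust cost.
    """
    # Start from the plain least-squares answer (the convex problem).
    x = sum(measurements) / len(measurements)
    r2_max = max((m - x) ** 2 for m in measurements)
    # A large mu makes the surrogate cost close to an ordinary quadratic.
    mu = max(1.0, 2.0 * r2_max / c ** 2)

    while True:
        # Weight update: large residuals receive small weights.
        weights = [(mu * c ** 2 / ((m - x) ** 2 + mu * c ** 2)) ** 2
                   for m in measurements]
        # Variable update: weighted least squares, here a weighted mean.
        x = sum(w * m for w, m in zip(weights, measurements)) / sum(weights)
        if mu <= 1.0:
            break
        # Sharpen the cost a little and repeat.
        mu = max(1.0, mu / shrink)
    return x, weights

# Hypothetical data: six good measurements near 2.0 and two bad ones.
measurements = [1.9, 2.0, 2.1, 2.05, 1.95, 2.0, 9.0, 10.0]
estimate, weights = graduated_robust_mean(measurements, c=0.2)
print(round(estimate, 3), [round(w, 3) for w in weights])
```

In this toy run the two bad measurements end up with weights near zero, so the estimate settles close to the value the good measurements agree on. In the keypoint setting, the weighted mean would be replaced by a weighted pose-alignment solve.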

Adapting their model to different cars

When matching the 2D image with the 3D model, one assumption is that the 3D model will align with the identified type of car. But what happens if the imaged car has a shape that the robot has never seen in its library? “We now need to both estimate the position of the car and reconstruct the shape of the model,” says Yang.

The team has figured out a way around this challenge. The 3D model is morphed to match the 2D image by expressing it as a linear combination of previously identified vehicle shapes. For example, the model could shift from an Audi toward a Hyundai as it registers the correct build of the actual car. Identifying the approaching car’s dimensions is key to preventing collisions. This work made Yang and his team Best Paper Award finalists at the Robotics: Science and Systems (RSS) Conference, where Yang was also named an RSS Pioneer.
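The linear-combination idea can be sketched in a few lines. The example below blends two library shapes and recovers the blend weight that best explains an observed shape. It ignores the car's pose and the camera entirely, which the full problem must estimate at the same time. The shapes, keypoint coordinates, and function names are hypothetical.

```python
def blend(shape_a, shape_b, alpha):
    """Return the shape (1 - alpha) * shape_a + alpha * shape_b, point by point."""
    return [tuple((1 - alpha) * a + alpha * b for a, b in zip(pa, pb))
            for pa, pb in zip(shape_a, shape_b)]

def fit_alpha(shape_a, shape_b, observed):
    """Least-squares blend weight that makes the blended shape match observed."""
    num = den = 0.0
    for pa, pb, po in zip(shape_a, shape_b, observed):
        for a, b, o in zip(pa, pb, po):
            num += (o - a) * (b - a)
            den += (b - a) ** 2
    return num / den

# Hypothetical 3D keypoints (meters) for two library vehicles.
compact = [(0.0, 0.0, 0.0), (3.9, 0.0, 0.0), (3.9, 1.7, 0.0), (2.0, 0.85, 1.4)]
suv = [(0.0, 0.0, 0.0), (4.8, 0.0, 0.0), (4.8, 1.9, 0.0), (2.4, 0.95, 1.8)]

# An unseen car whose shape lies 30 percent of the way from compact to SUV.
unseen = blend(compact, suv, 0.3)
alpha = fit_alpha(compact, suv, unseen)
print(round(alpha, 3))
```

A larger shape library would use one weight per library vehicle rather than a single blend parameter, but the principle is the same: the unknown car is described by how much of each known car it resembles.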

In addition to presenting at international conferences, Yang enjoys discussing and sharing his research with the general public. He recently shared his work on certifiable perception during MIT’s first research SLAM public showcase. He also co-organized the first virtual LIDS student conference alongside industry leaders. His favorite talks focused on ways to combine theory and practice, such as Kimon Drakopoulos’ use of AI algorithms to guide how to allocate Greece’s Covid-19 testing resources. “Something that stuck with me was how he really emphasized what these rigorous analytical tools can do to benefit social good,” says Yang.

Yang plans to continue researching challenging problems that address safe and trustworthy autonomy by pursuing a career in academia. His dream of becoming a professor is also fueled by his love of mentoring others, which he enjoys doing in Carlone’s lab. He hopes his future work will lead to more discoveries that help protect people’s lives. “I think many are realizing that the existing set of solutions we have to promote human safety are not sufficient,” says Yang. “In order to achieve trustworthy autonomy, it is time for us to embrace a diverse set of tools to design the next generation of safe perception algorithms.”

“There must always be a failsafe, since none of our human-made systems can be perfect. I believe it will take the power of both rigorous theory and computation to revolutionize what we can successfully unveil to the public.”